
Software

The ORBIT Radio Grid Testbed is operated as a shared service to allow a number of projects to conduct wireless network experiments on-site or remotely. Although only one experiment can run on the testbed at a time, automating the use of the testbed allows each one to run quickly, saving the results to a database for later analysis.

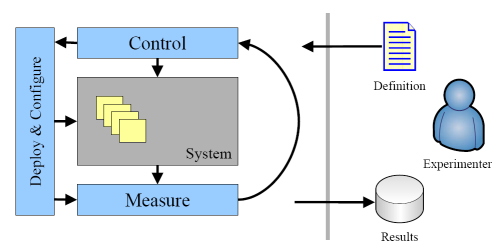

In other words, ORBIT may be viewed as a set of services that takes an experiment definition as input and returns the experimental results as output, as illustrated in Figure 1 below. The experiment definition is a script that interfaces to the ORBIT Services. These services can reboot each of the nodes in the 20x20 grid, load an operating system together with any modified system software and application software on each node, and then set the relevant experiment parameters, both in each grid node and in each non-grid node needed to add controlled interference or to monitor traffic and interference. The script also specifies the filtering and collection of the experimental data and generates a database schema to support subsequent analysis of that data.

Figure 1. Experiment Support Architecture

Experiment Control

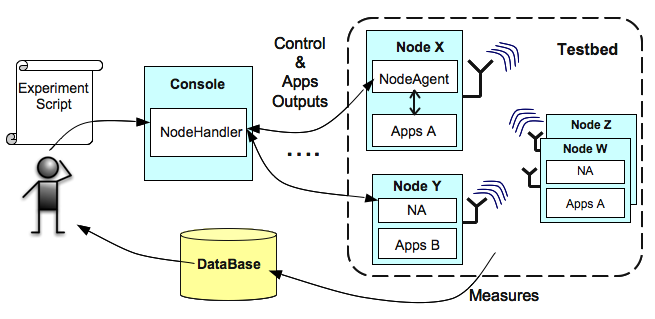

The main component of the Experiment Management Service is the Node Handler, which functions as an Experiment Controller. It multicasts commands to the nodes at the appropriate time and keeps track of their execution. The Node Agent software component resides on each node, where it listens for and executes the commands from the Node Handler. It also reports information back to the Node Handler. The combination of these two components gives the user control over the testbed and enables the automated collection of experimental results. Because the Node Handler uses a rule-based approach to monitoring and controlling experiments, occasional feedback from experimenters may be required to fine-tune its operation. Figure 4 illustrates the execution of an experiment from the user's point of view.
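The controller/agent split described above can be sketched in a few lines of Python. This is only an in-process illustration, not the actual Node Handler or Node Agent code: the class names, the `broadcast` and `pending` methods, the node names, and the command strings are all hypothetical, and the multicast is simulated with a plain loop.

```python
from dataclasses import dataclass, field


@dataclass
class NodeAgent:
    """Hypothetical stand-in for the agent running on one grid node."""
    name: str

    def execute(self, command: str) -> str:
        # A real agent would run the command on the node; here we only
        # return a status report for the controller.
        return f"{self.name}: done {command}"


@dataclass
class NodeHandler:
    """Hypothetical controller that sends commands and tracks execution."""
    agents: list
    reports: dict = field(default_factory=dict)

    def broadcast(self, command: str) -> None:
        # Simulated multicast: every agent receives the same command,
        # and each report is recorded per node.
        for agent in self.agents:
            self.reports.setdefault(agent.name, []).append(agent.execute(command))

    def pending(self, command: str) -> list:
        # Names of nodes that have not yet reported completing the command.
        done = f"done {command}"
        return [a.name for a in self.agents
                if not any(r.endswith(done) for r in self.reports.get(a.name, []))]


handler = NodeHandler([NodeAgent("node1-1"), NodeAgent("node1-2")])
handler.broadcast("load-image")
print(handler.pending("load-image"))  # prints []
```

Keeping the per-node reports on the controller side is what lets it decide when every node has finished a step before issuing the next command.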

Finally, using the Node Handler (via a dedicated "image nodes" experiment, which will be described later), users can quickly load hard disk images onto the nodes of their experiment. This imaging process allows different groups of nodes to run different OS images. It relies on a scalable multicast protocol and on the Frisbee disk-loading server from M. Hibler et al. Similarly, users can also use the Node Handler to save the image of a node's disk into an archive file.
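To illustrate how different groups of nodes can receive different images, the following sketch groups nodes by the image assigned to them, so that each image would need to be multicast only once to its group. The function name, node names, and image file names are hypothetical, and the sketch does not invoke Frisbee or the Node Handler.

```python
from collections import defaultdict


def group_by_image(assignment):
    """Map each image name to the list of nodes that should receive it."""
    groups = defaultdict(list)
    for node, image in assignment.items():
        groups[image].append(node)
    return dict(groups)


# Hypothetical node-to-image assignment for one experiment.
assignment = {
    "node1-1": "baseline.ndz",
    "node1-2": "baseline.ndz",
    "node2-1": "custom-kernel.ndz",
}
plan = group_by_image(assignment)
for image, nodes in plan.items():
    print(image, "->", nodes)
```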

The user can perform all these actions on the testbed(s) via the generic command omf, which is the access point to control the Node Handler, his/her experiment, and the nodes on the testbed(s).

    # To see a list of available commands
    omf help

    # To see the usage and more help about a particular command, e.g. the "load" command
    omf help load

Figure 4. Execution of an Experiment from a User's point-of-view

Measurement & Result Collection

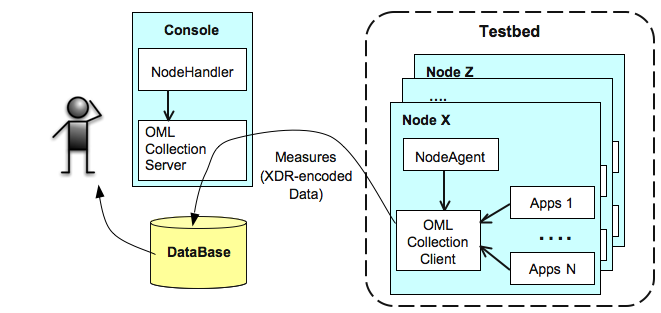

The ORBIT Measurement Framework & Library (OML) is responsible for collecting the experimental results. It is based on a client/server architecture as illustrated in Figure 5 below.

One instance of an OML Collection Server is started by the Node Handler for a particular experiment execution. This server will listen and collect experimental results from the various nodes involved in the experiment. It uses an SQL database for persistent data archiving of these results.
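As a rough illustration of persistent archiving in an SQL database, the sketch below stores a few samples with Python's standard `sqlite3` module. It is not the OML server implementation: the table layout, the function names, the metric name, and the sample values are assumptions made only for this example.

```python
import sqlite3


def open_results_db(path=":memory:"):
    """Open (or create) a results database with a single samples table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS samples (node TEXT, metric TEXT, value REAL)"
    )
    return conn


def store_sample(conn, node, metric, value):
    """Archive one measurement reported by a node."""
    conn.execute("INSERT INTO samples VALUES (?, ?, ?)", (node, metric, value))
    conn.commit()


conn = open_results_db()
store_sample(conn, "node1-1", "throughput_kbps", 512.0)
store_sample(conn, "node1-2", "throughput_kbps", 498.5)

# Later analysis can query the archived results with plain SQL.
rows = conn.execute("SELECT node, value FROM samples ORDER BY node").fetchall()
print(rows)  # prints [('node1-1', 512.0), ('node1-2', 498.5)]
```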

On each experimental node, one OML Collection Client is associated with each experimental application. The details and "How-To" of such an association will be presented in a later part of this tutorial. In the context of this introduction to the testbed, the client-side measurement collection can be viewed as follows. The application forwards any required measurements or outputs to the OML Collection Client. The client optionally applies some filtering or processing to these measurements and outputs, and then sends them to the OML Collection Server (currently over one multicast channel per experiment, for logical segregation of data and for scalability).
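The client-side path (application, optional filter, then transmission to the server) can be sketched as follows. This is a simplified model under stated assumptions, not the OML client API: the `CollectionClient` class, its `report` method, the averaging filter, and the sample values are hypothetical, and sending to the server is replaced by appending to a local list.

```python
from statistics import mean


class CollectionClient:
    """Hypothetical client that averages samples before forwarding them."""

    def __init__(self, send, window=3):
        self.send = send        # callable standing in for the link to the server
        self.window = window    # number of samples combined into one report
        self.buffer = []

    def report(self, value):
        # The application calls this for every raw measurement.
        self.buffer.append(value)
        if len(self.buffer) == self.window:
            # Filter step: forward one averaged value per full window.
            self.send(mean(self.buffer))
            self.buffer.clear()


received = []  # stands in for the collection server
client = CollectionClient(send=received.append, window=3)
for rssi in [-60, -62, -58, -70, -71, -69]:
    client.report(rssi)
print(received)
```

Filtering on the client reduces the traffic sent to the server: six raw samples above result in only two forwarded values.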

There are two alternative methods for users to interface their experimental applications with the OML Collection Clients and to define the requested measurement points and parameters. These methods and measurement definitions will be presented in detail later in this tutorial.

Figure 5. OML component architecture.

Finally, the ORBIT platform also provides the Libmac library. Libmac is a user-space C library that allows applications to inject and capture MAC layer frames, manipulate wireless interface parameters at both aggregate and per-frame levels, and communicate wireless interface parameters over the air on a per-frame level. Users can interface their experimental applications with Libmac to collect MAC layer measurements from their experiments. A separate section of the documentation provides more information on Libmac and its operation.

Services

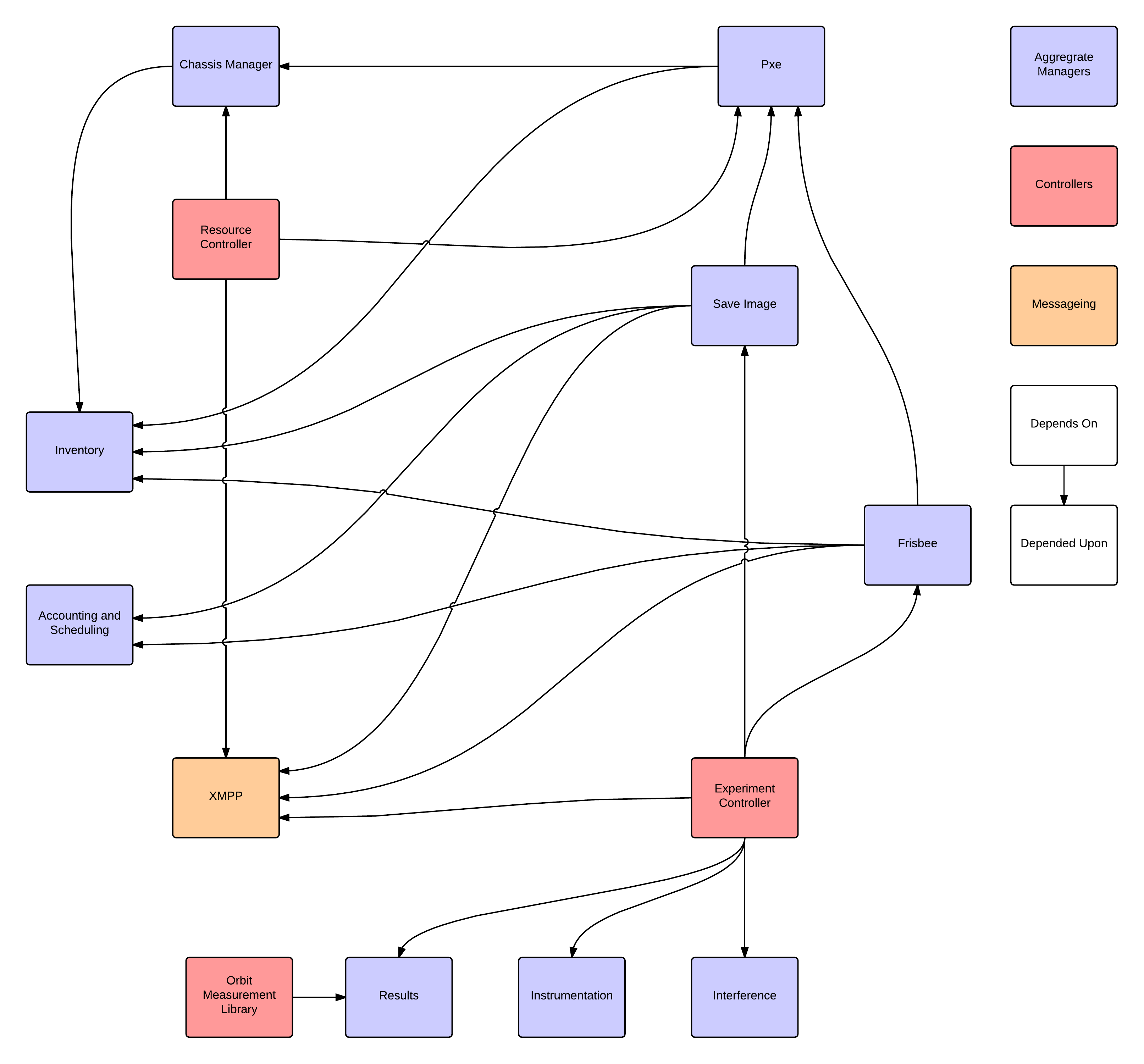

The ORBIT Framework is orchestrated via a collection of interdependent services. These services control the flow of an experiment. Due to the distributed nature of the testbed, the services can (and often do) live on physically distant machines. The following graph shows the interdependency of the ORBIT services:

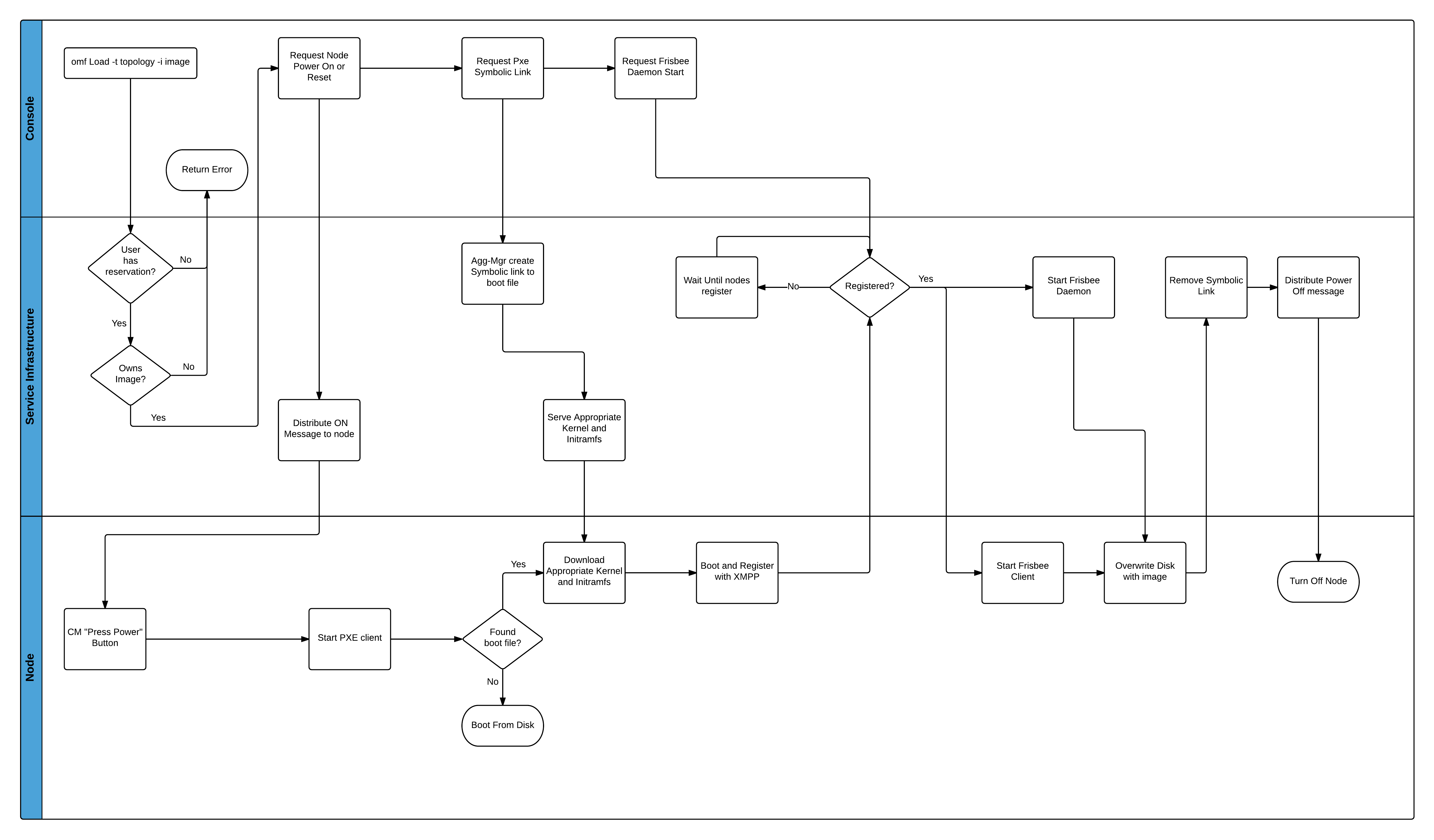

As an example of the flow of an experiment, we can consider the process for imaging the nodes. All experiments are some variation of this process. Consider:

As we can see from this graph, different phases of an experiment occur in different parts of the infrastructure. Some operations (e.g. compute, communicate, overwrite disk) are done on the nodes themselves. Others are initiated from the console (the entry point for user commands). Much of the experiment orchestration happens within the service infrastructure.
