
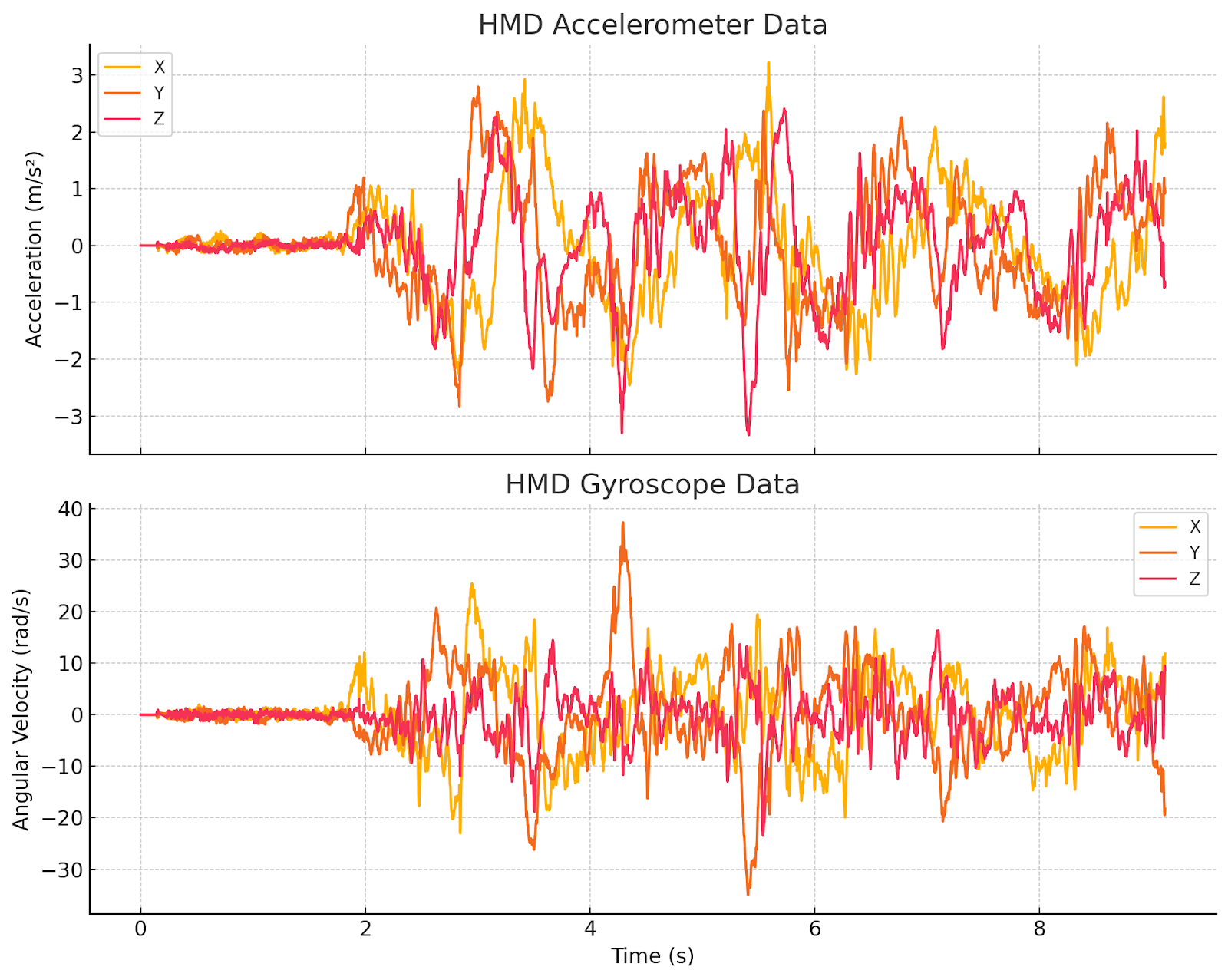

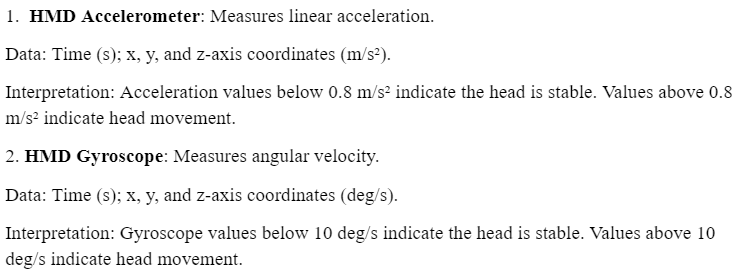


Privacy Leakage Study and Protection for Virtual Reality Devices

Advisor: Dr. Yingying (Jennifer) Chen

Mentors: Changming Li (GR), Honglu Li (GR), Tianfang Zhang (GR)

Team: Dirk Catpo Risco (GR), Brody Vallier (HS), Emily Yao (HS)

Project Overview

Augmented reality/virtual reality (AR/VR) is used in applications ranging from communication and tourism to healthcare. Accessing the built-in motion sensors does not require user permission, since most VR applications need this information to function. However, this opens a privacy vulnerability: zero-permission motion sensors can be used to infer live speech, which is a problem when that speech includes sensitive information.

Project Goal

The purpose of this project is to extract motion data from the inertial measurement unit (IMU) of AR/VR devices and then input this data to a large language model (LLM) to predict what the user is doing.

Weekly Updates

Week 1

Progress

- Read research paper [1] regarding an eavesdropping attack called Face-Mic

Next Week Goals

- We plan to meet with our mentors and get more information on the duties and expectations of our project

Week 2

Progress

- Read research paper [2] regarding LLMs comprehending the physical world

- Built a connection between the research paper and the privacy concerns of AR/VR devices

Next Week Goals

- Get familiar with AR/VR device:

  - Meta Quest

  - How to use device

  - Configure settings on host computer

- Extract motion data from IMU

  - Connecting motion sensor application program interface (API) to access data

  - Data processing method

Week 3

Progress

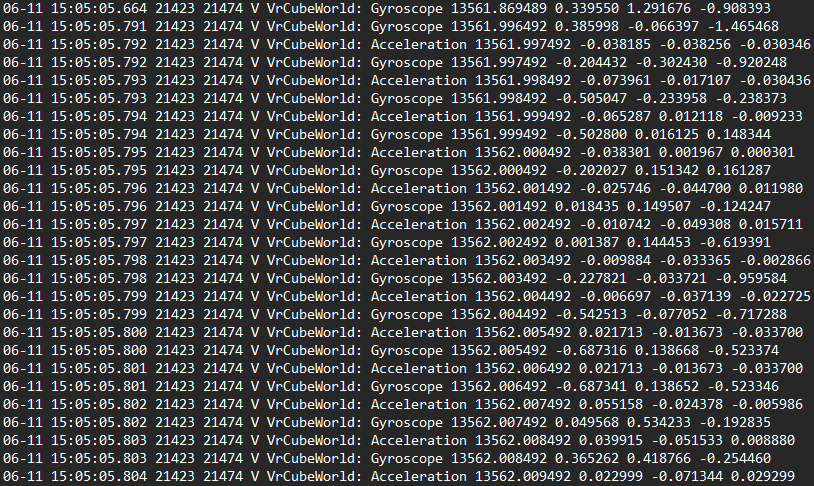

- Set up host computer and Android Studio environment

- Started extracting data from the inertial measurement unit (IMU)

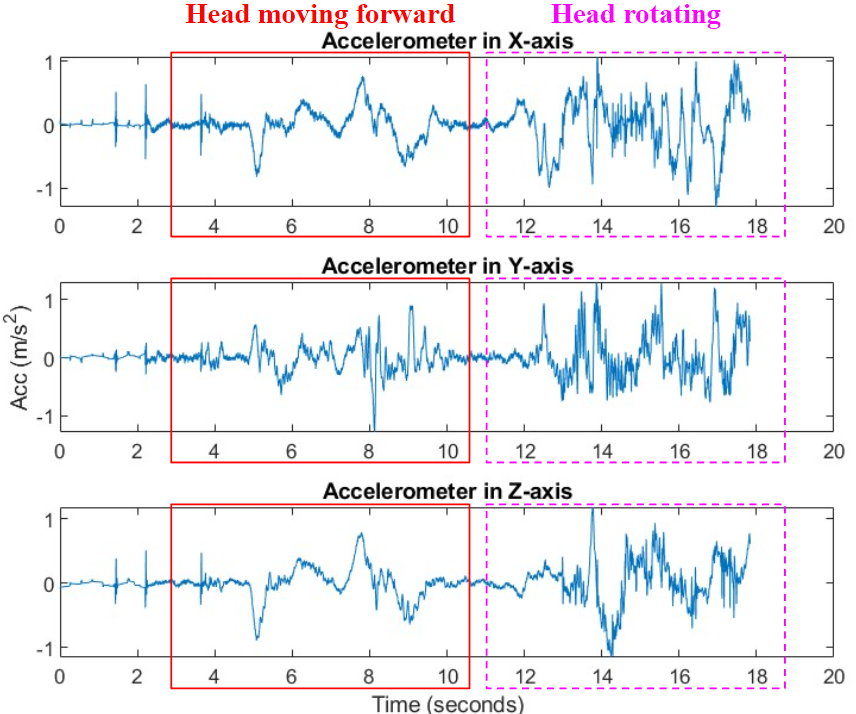

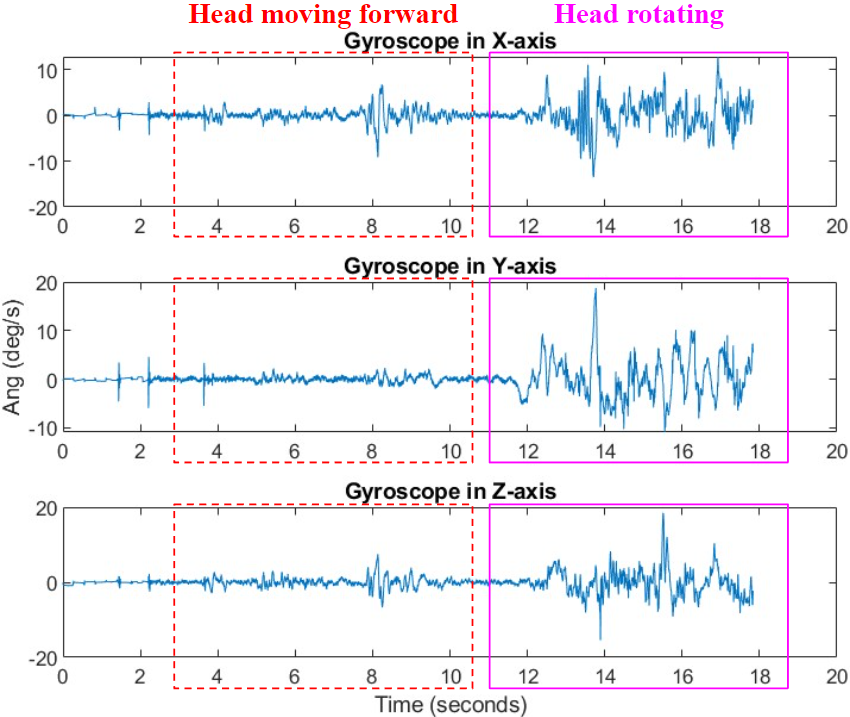

- Recorded and ran trials of varying head motions
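
The log format produced by the data-collection app is not described here. As a minimal sketch, assuming each IMU sample is written as a CSV row `timestamp_ms,sensor,x,y,z` (a hypothetical layout, as are the function name and sample values), recorded trials could be grouped into per-sensor series like this:

```python
import csv
import io
from collections import defaultdict

# Hypothetical log excerpt; the real app's output format may differ.
SAMPLE_LOG = """timestamp_ms,sensor,x,y,z
0,acc,0.01,9.80,0.12
0,gyro,0.00,0.02,-0.01
10,acc,0.03,9.79,0.10
10,gyro,0.01,0.05,-0.02
"""

def parse_imu_log(text):
    """Group IMU rows by sensor name into lists of (t, x, y, z) tuples."""
    series = defaultdict(list)
    for row in csv.DictReader(io.StringIO(text)):
        sample = (int(row["timestamp_ms"]), float(row["x"]),
                  float(row["y"]), float(row["z"]))
        series[row["sensor"]].append(sample)
    return dict(series)

imu = parse_imu_log(SAMPLE_LOG)
print(len(imu["acc"]), len(imu["gyro"]))
```

Keeping accelerometer and gyroscope samples in separate series makes it straightforward to plot or filter each sensor on its own.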

Next Week Goals

- Run more tests to collect more data

- Design more motions for data collection

  - Different head motions

    - Rotational

    - Linear

  - Combinations of head motions

    - Looking left and then right

    - Looking up and then down

Week 4

Progress

- Designed and collected more motion data

  - Looking up, then back to the middle

  - Looking right, then back to the middle

  - Moving head around in a shape

  - Moving head backward and forward

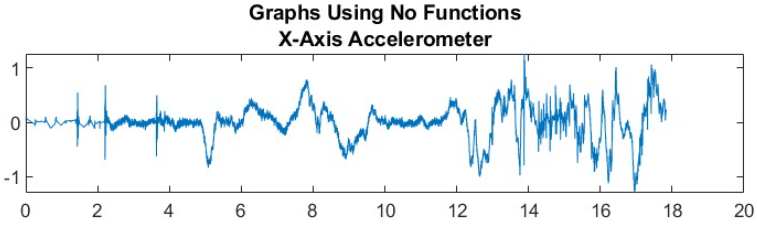

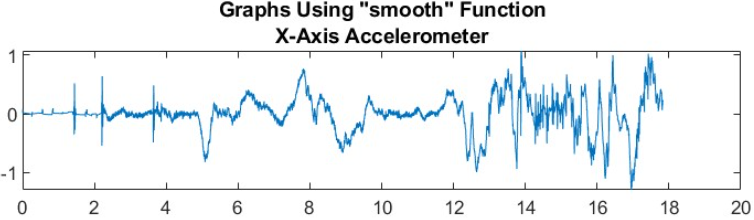

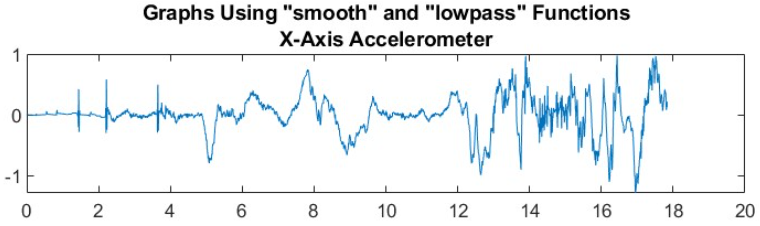

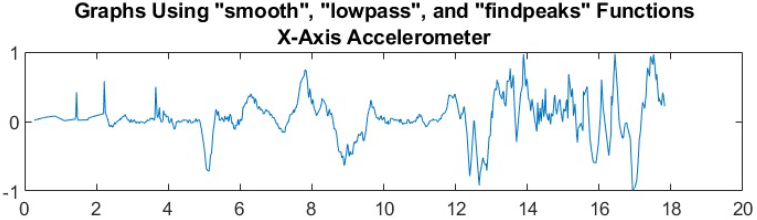

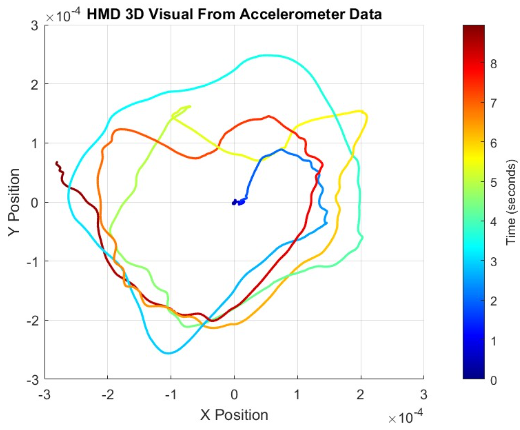

- Used MATLAB noise-removal functions to clean up the graphs

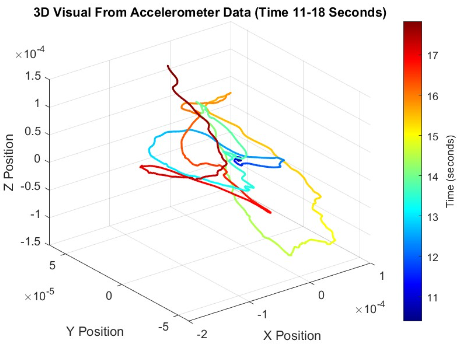

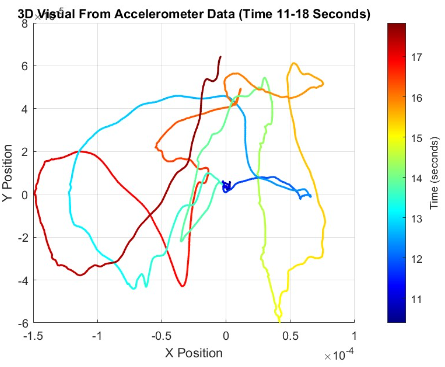

- Created a 3D visualization of acceleration to show time and position
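
The specific MATLAB functions and parameters used are not listed here. As a rough Python sketch of the same idea, assuming a centered moving average for smoothing followed by simple local-maximum detection (the helper names `moving_average` and `find_peaks` and the synthetic trace are illustrative, not the project's code):

```python
def moving_average(signal, window=5):
    """Smooth a 1D signal with a centered moving average.

    Samples near the edges are averaged over a shorter window.
    """
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed

def find_peaks(signal, min_height=0.0):
    """Return indices of strict local maxima at or above min_height."""
    return [i for i in range(1, len(signal) - 1)
            if signal[i] > signal[i - 1]
            and signal[i] > signal[i + 1]
            and signal[i] >= min_height]

# Synthetic noisy trace standing in for one accelerometer axis.
raw = [0.0, 0.2, 0.1, 0.9, 1.6, 2.1, 1.7, 1.0, 0.3, 0.4, 0.1, 0.0]
smooth = moving_average(raw, window=3)
peaks = find_peaks(smooth, min_height=1.0)
print(peaks)
```

Smoothing before peak detection suppresses the small spurious maxima in the raw trace, so only the main motion peak remains.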

Next Week Goals

- Find a way to get hand motion data using Android Studio

- Work on fixed prompts to get accurate LLM results using ChatGPT 4o and ChatGPT 4

Week 5

Progress

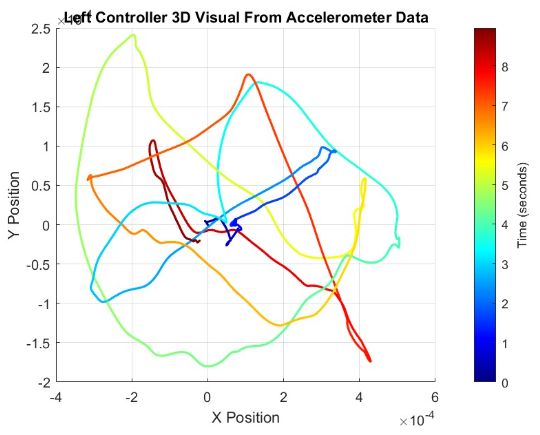

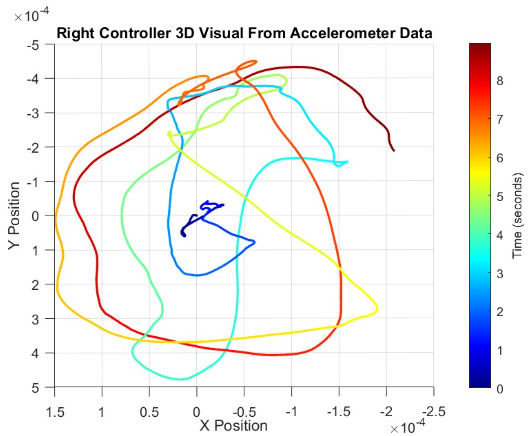

- Enabled hand motion data collection using the VR device

- Used Android Studio and VrApi to access the VR controller interface and extract motion data

- Conducted additional motion experiments to gather comprehensive data sets

- Implemented 3D plots to visualize and analyze hand motion data for accuracy

Next Week Goals

- Utilize motion research paper [3] to model more motion activities

- Start building a CNN model that can recognize activity based on motion data

Week 6

Progress

- Made a list of different motion data to capture and train a convolutional neural network (CNN)

- Researched previous work based on raw motion data and CNNs

- Specifics of the motion data

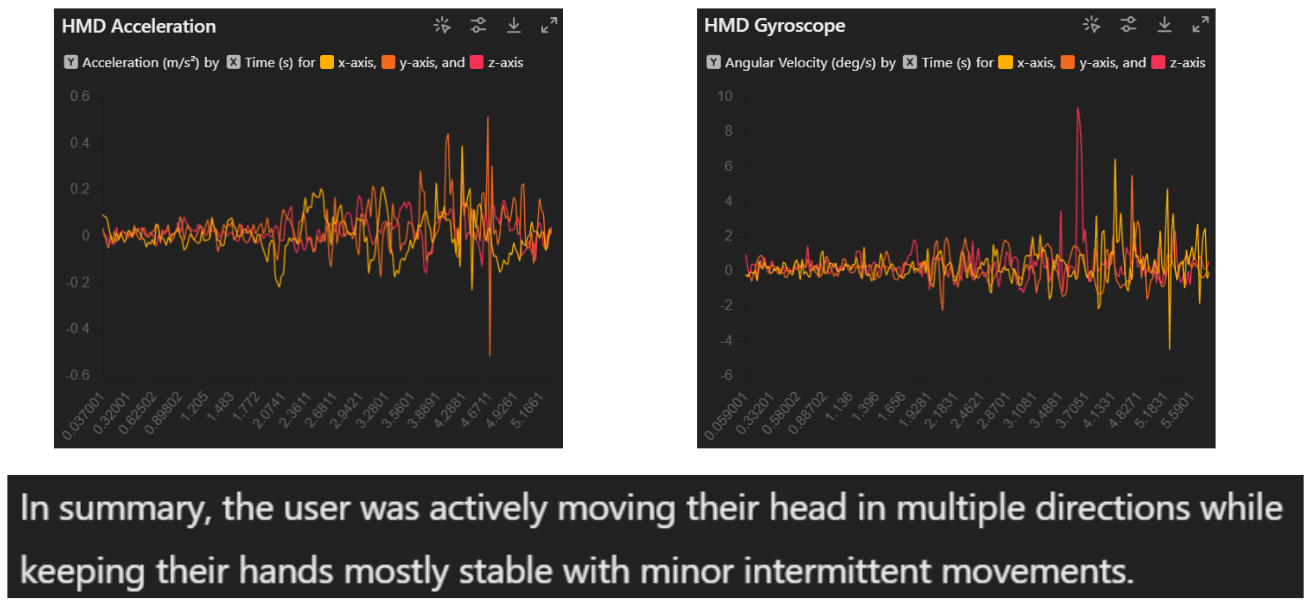

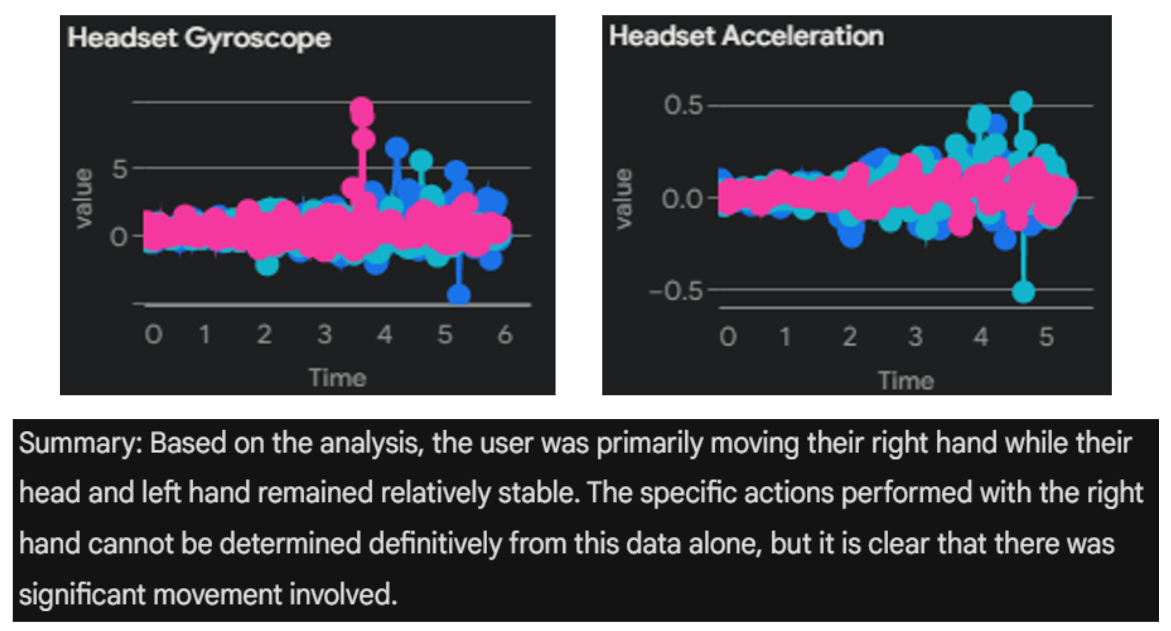

- Designed a prompt for the LLM and reviewed the output

Next Week Goals

- Start getting more motion data

- Start using LLM to analyze the collected data

- Use the designed prompts

- Design and try more prompt structures and compare LLM responses

Week 7

Progress

- Collected 250 samples from six different motions to enlarge the dataset for the CNN task

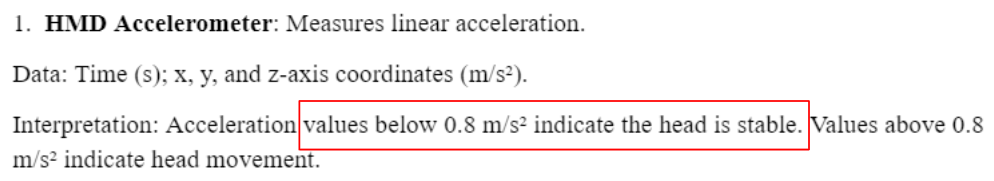

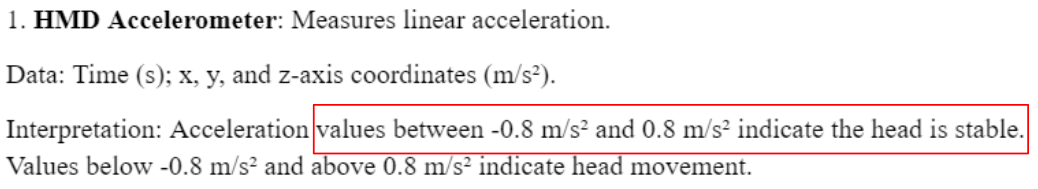

- Designed prompts with specific parts for LLMs to establish activity recognition tasks

- Tested prompt with different LLMs
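
The actual prompt text appears only in the figures above. As a hedged sketch of what a prompt "with specific parts" might look like, assuming role, task, label, and data sections (the section wording, the `build_prompt` helper, and the motion labels are all placeholders, not the project's prompt):

```python
# Placeholder labels; the project's six motions may be named differently.
MOTION_LABELS = ["head_left", "head_right", "head_up", "head_down",
                 "front_raise", "side_raise"]

def build_prompt(labels, samples):
    """Assemble a fixed prompt with role, task, label, and data sections."""
    data_lines = [f"t={t}ms ax={ax:.2f} ay={ay:.2f} az={az:.2f}"
                  for t, ax, ay, az in samples]
    return "\n".join([
        "Role: You are an expert in analyzing IMU motion data.",
        "Task: Classify the motion below as exactly one of the listed labels.",
        "Labels: " + ", ".join(labels),
        "Data:",
        *data_lines,
        "Answer with the label only.",
    ])

# Two made-up accelerometer samples: (timestamp_ms, ax, ay, az).
samples = [(0, 0.01, 9.80, 0.12), (10, 0.03, 9.79, 0.10)]
prompt = build_prompt(MOTION_LABELS, samples)
print(prompt)
```

Keeping every section except the data fixed means that differences between responses from different LLMs come from the models rather than from the prompt.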

Next Week Goals

- Improve the prompt design to get more accurate prediction results from LLMs

- Begin developing a CNN using samples collected from the six motion patterns

Week 8

Progress

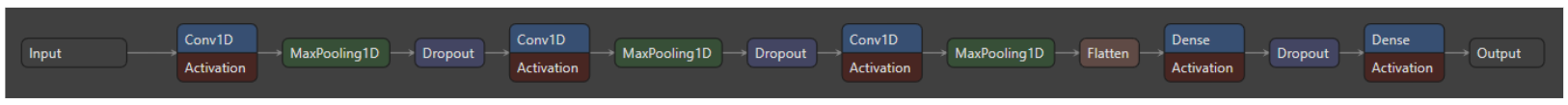

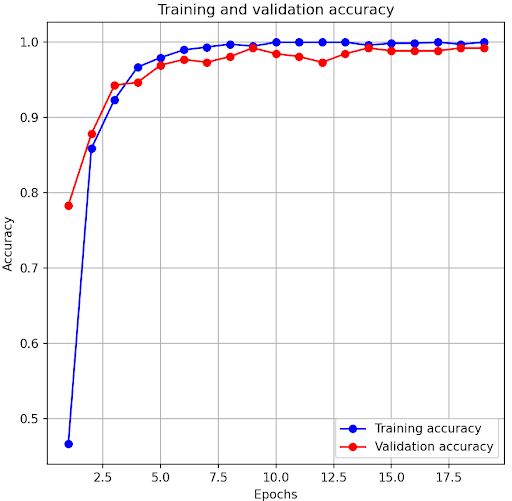

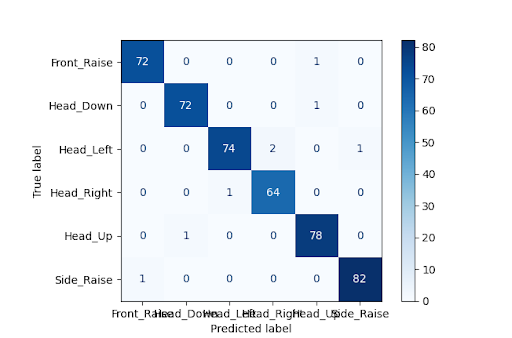

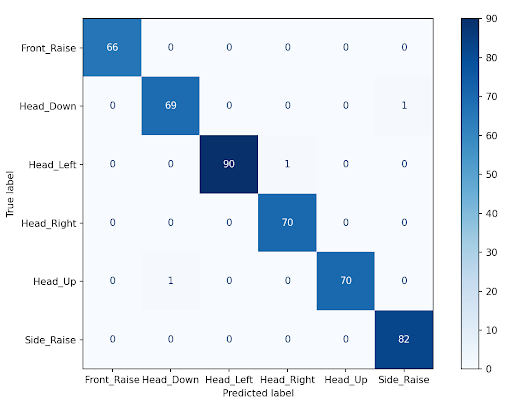

- Built a 1-dimensional (1D) convolutional neural network (CNN) for activity recognition

- Improved the prompt design to get more accurate prediction results from the LLM

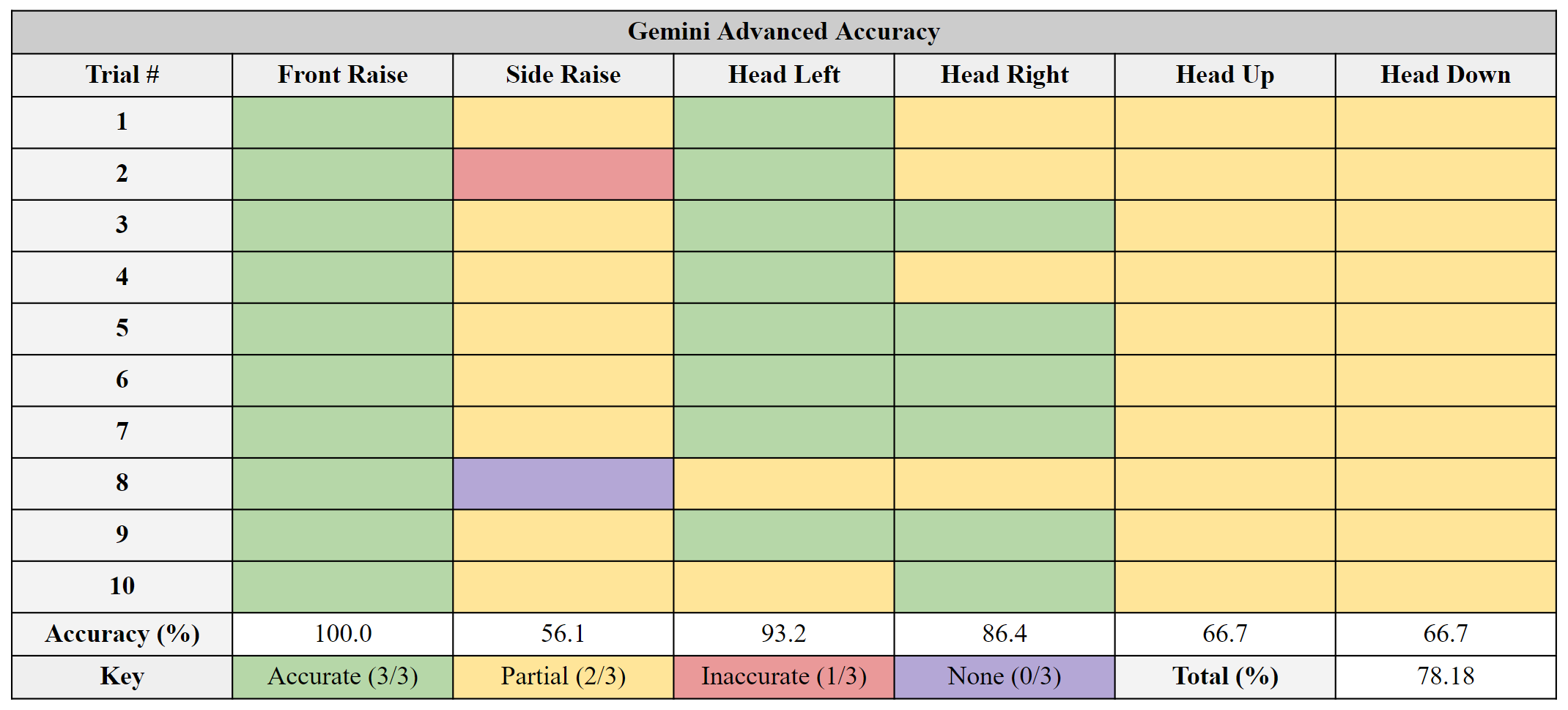

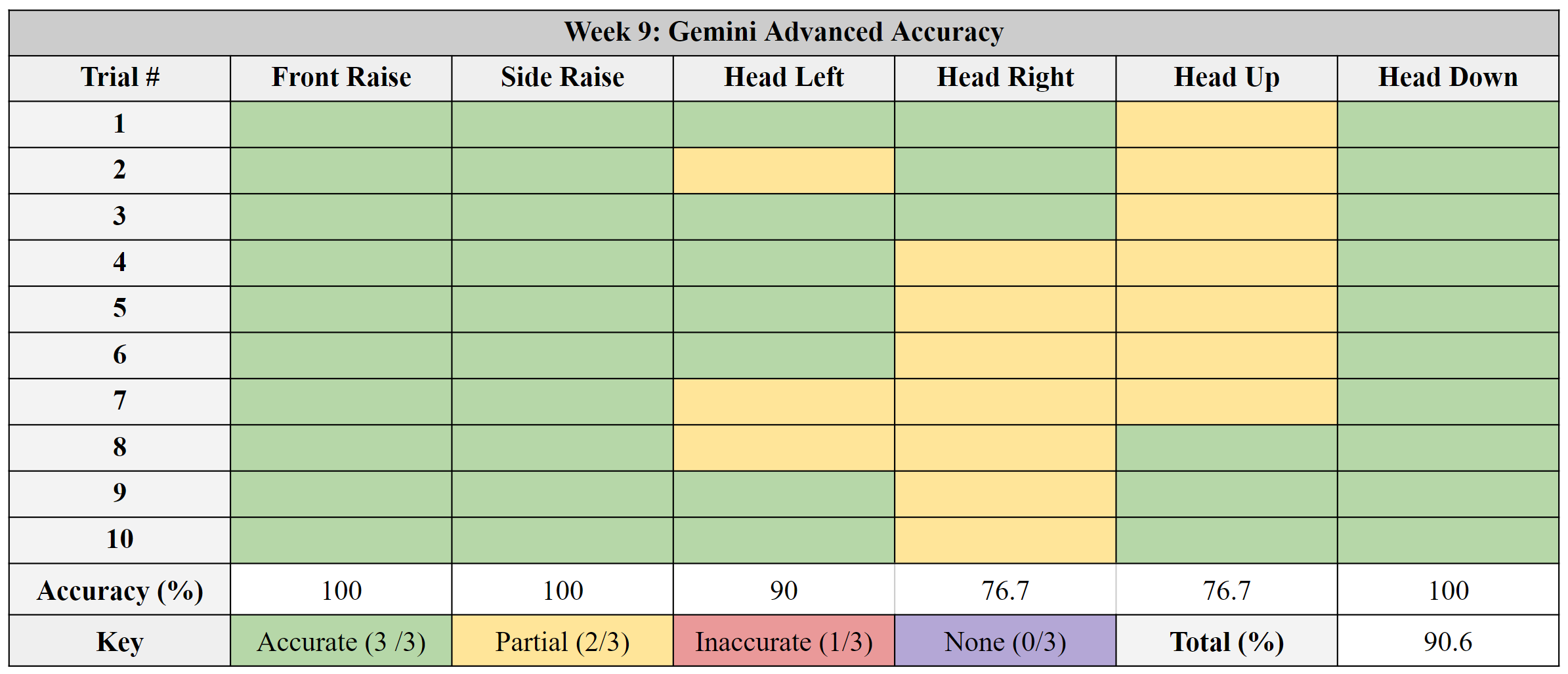

- Measured the LLM's accuracy at classifying six different motions with the new prompt
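
How the accuracy figures were computed is not spelled out here. A minimal sketch, assuming accuracy is the fraction of samples whose predicted label matches the true label, with a confusion count alongside it (the labels and predictions below are toy values, not project results):

```python
from collections import Counter

def accuracy(true_labels, predicted_labels):
    """Fraction of samples whose prediction matches the true label."""
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)

def confusion_counts(true_labels, predicted_labels):
    """Count how often each (true, predicted) label pair occurs."""
    return Counter(zip(true_labels, predicted_labels))

# Toy data for illustration only.
truth = ["up", "up", "down", "left", "left", "right"]
preds = ["up", "down", "down", "left", "left", "left"]

acc = accuracy(truth, preds)
counts = confusion_counts(truth, preds)
print(f"accuracy = {acc:.2%}")
print(counts[("up", "down")], counts[("right", "left")])
```

The confusion counts show which motions are mistaken for each other, which is useful when deciding what to change in a prompt.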

Next Week Goals

- Use MATLAB to convert time-domain motion data into the frequency domain as an additional data representation

- Bring LLM accuracy (78.18%) closer to CNN accuracy (98.22%) by improving the fixed prompt
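
The time-to-frequency conversion is planned in MATLAB and its details are not given here. As an illustrative Python sketch of the underlying idea, a direct discrete Fourier transform can recover the dominant frequency of a motion trace (the 100 Hz sample rate, the synthetic 5 Hz signal, and the `dft_magnitudes` helper are assumptions for this example):

```python
import cmath
import math

def dft_magnitudes(signal):
    """Return DFT magnitudes for frequency bins 0..N//2 of a real signal."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n))
        mags.append(abs(total))
    return mags

SAMPLE_RATE_HZ = 100  # assumed sampling rate, not taken from the device
n = 100
# Synthetic 5 Hz oscillation standing in for a repeated head motion.
signal = [math.sin(2 * math.pi * 5 * t / SAMPLE_RATE_HZ) for t in range(n)]

mags = dft_magnitudes(signal)
dominant_bin = max(range(1, len(mags)), key=mags.__getitem__)  # skip DC bin
dominant_hz = dominant_bin * SAMPLE_RATE_HZ / n
print(dominant_hz)
```

This direct form costs O(N²) and is only meant to show the transform; a fast Fourier transform routine would be the practical choice for long recordings.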

Week 9

Progress

- Built threat models for this AR/VR human activity recognition (HAR) project

- Applied feature extraction methods and used a support vector machine (SVM) model to select effective features

- Improved the accuracy of the LLM fixed prompt from 78.18% to 90.6%

Next Week Goals

- Improve the LLM fixed prompt beyond 90.6% by adding statistical features, derived from the SVM results (99.33%), to the prompt
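
Which statistical features the SVM selected is not stated here. As a sketch under that caveat, assuming simple per-axis statistics (mean, standard deviation, minimum, maximum, range) and hypothetical helper names, features could be computed and formatted into prompt lines like this:

```python
import statistics

def axis_features(values):
    """Compute basic statistics for one axis of a motion window."""
    return {
        "mean": statistics.fmean(values),
        "std": statistics.pstdev(values),  # population standard deviation
        "min": min(values),
        "max": max(values),
        "range": max(values) - min(values),
    }

def features_to_prompt_lines(features_by_axis):
    """Format per-axis features as one compact text line per axis."""
    lines = []
    for axis, feats in features_by_axis.items():
        parts = ", ".join(f"{name}={value:.3f}" for name, value in feats.items())
        lines.append(f"{axis}: {parts}")
    return lines

# Made-up window of accelerometer values for a single axis.
window = {"ax": [1.0, 2.0, 3.0, 4.0]}
features = {axis: axis_features(vals) for axis, vals in window.items()}
prompt_lines = features_to_prompt_lines(features)
print(prompt_lines[0])
```

Summarizing a window as a few numbers keeps the prompt short compared with pasting in every raw sample.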

Week 10

Progress

- Placeholder

Next Week Goals

- Placeholder

Links to Presentations

Week 1 Week 2 Week 3 Week 4 Week 5 Week 6 Week 7 Week 8 Week 9 Week 10 Final Presentation

References

[5] Brownlee, Jason. “1D Convolutional Neural Network Models for Human Activity Recognition.” MachineLearningMastery.com, 27 Aug. 2020, machinelearningmastery.com/cnn-models-for-human-activity-recognition-time-series-classification/#comments.