
Robotic IoT Smartspace Testbed

WINLAB Summer Internship 2023

Group Members: Jeremy Hui, Katrina Celario, Julia Rodriguez, Laura Liu, Jose Rubio, Michael Cai

Project Overview

The main purpose of the project is to explore the Internet of Things (IoT) and its transformative potential when combined with Machine Learning (ML). To do so, the group continues the work on the SenseScape Testbed, an IoT experimentation platform for indoor environments containing a variety of sensors, location-tracking nodes, and robots. This testbed enables IoT applications such as, but not limited to, human activity recognition, speech recognition, and indoor mobility tracking. In addition, this project advocates for energy efficiency, occupant comfort, and context representation. The SenseScape Testbed provides an adaptable environment for labeling and testing advanced ML algorithms centered around IoT.

Project Goals

Previous Group's Work: https://dl.acm.org/doi/abs/10.1145/3583120.3589838

Based on the future research section of the previous group's paper, there are two main goals the group wants to accomplish.

The first goal is to create a website that includes both real-time information on the sensors and a reservation system for remote access to the robot. For the sensors, the website should display the name of each sensor, whether it is online, the most recent time it was seen gathering data, and the continuous data stream itself. For the remote reservation/experimentation features, the website must be user-friendly: it should make it easy for users to execute commands while preventing them from changing things they should not have access to. The group aims to allow remote access to the LoCoBot through ROS (Robot Operating System) and SSH (Secure Shell), as long as all machines involved are connected to the same network (VPN).
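The sensor status view described above could be backed by a small helper like the following. This is only a minimal sketch, not the group's actual website code: the `SensorStatus` type, the `summarize_sensor` function, and the 30-second timeout are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class SensorStatus:
    name: str            # sensor name shown on the website
    last_seen: datetime  # most recent time the sensor reported data
    online: bool         # True if the sensor reported recently enough


def summarize_sensor(name, last_seen, now, timeout=timedelta(seconds=30)):
    """Build the status record for one sensor.

    A sensor counts as online when its last reading is no older than
    `timeout` (a hypothetical threshold; tune it to the sampling rate).
    """
    return SensorStatus(
        name=name,
        last_seen=last_seen,
        online=(now - last_seen) <= timeout,
    )
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the online/offline decision easy to test and lets the server use one consistent timestamp for all sensors on a page.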

The second goal is to automate the labeling of activity within the environment using natural language descriptions of the data provided in video format. The video auto-labeling can be done using neural networks (e.g., CNN and LSTM) in an encoder-decoder architecture for both feature extraction and the language model. For activities that cannot be classified with a specified level of certainty, the auto-labeling tool could save the timestamp and/or video clip and notify the user that manual labeling is required. If the network is fully trained, it would simply choose the label with the highest probability and possibly mark that data as “uncertain”. The overall aim is to connect this video data to the sensor data in the hope of bridging the gap between sensor data and text.
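The "choose the most probable label, flag it if uncertain" step can be sketched independently of the network itself. The example below assumes the model emits one raw score per candidate label. The `pick_label` function and the 0.8 threshold are hypothetical, and the label names are illustrative, borrowing two activities from the progress notes.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def pick_label(scores, labels, threshold=0.8):
    """Return (label, probability, needs_review) for one video clip.

    The label with the highest probability is always chosen;
    needs_review is True when that probability is below `threshold`,
    signalling that the clip should be queued for manual labeling.
    """
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best], probs[best] < threshold


# Illustrative label set (hypothetical, not the group's final taxonomy).
LABELS = ["turn on light", "walk in/out of room", "other"]
```

A clip whose scores are nearly equal across labels yields a low top probability and is flagged, while a clip with one dominant score passes through without review.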

Progress Overview

WEEK ONE

- Familiarized ourselves with the project topics by reading relevant research papers

- Set up ROS (Robot Operating System) on an Ubuntu distro

- Ran elementary Python scripts on the robot (talker/listener)

- Interacted with robot at CoRE

WEEK TWO

- Started to build the website

- Learned the basics of the Raspberry Pi by starting with a blank SD card:

→ Installed Raspberry Pi operating system and ROS

→ Connected to a ZeroTier network

→ Downloaded the packages necessary for the data-collecting Python scripts

WEEK THREE

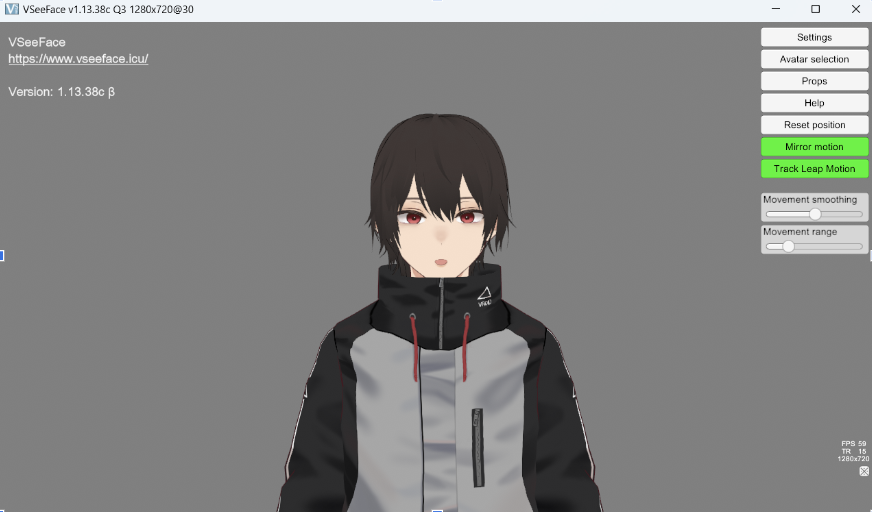

- Explored the possibility of including Unity in the project

→ Created 3D avatar in Unity which mimics live webcam feed

- Researched the equipment needed for experiments

- Started cloning the original SD card, which contained all Python scripts, packages, and ROS, onto blank SD cards

→ The cloned cards were inserted into 25 Raspberry Pis

→ Each Pi was given a unique name

- Made significant progress on the website aspect

WEEK FOUR

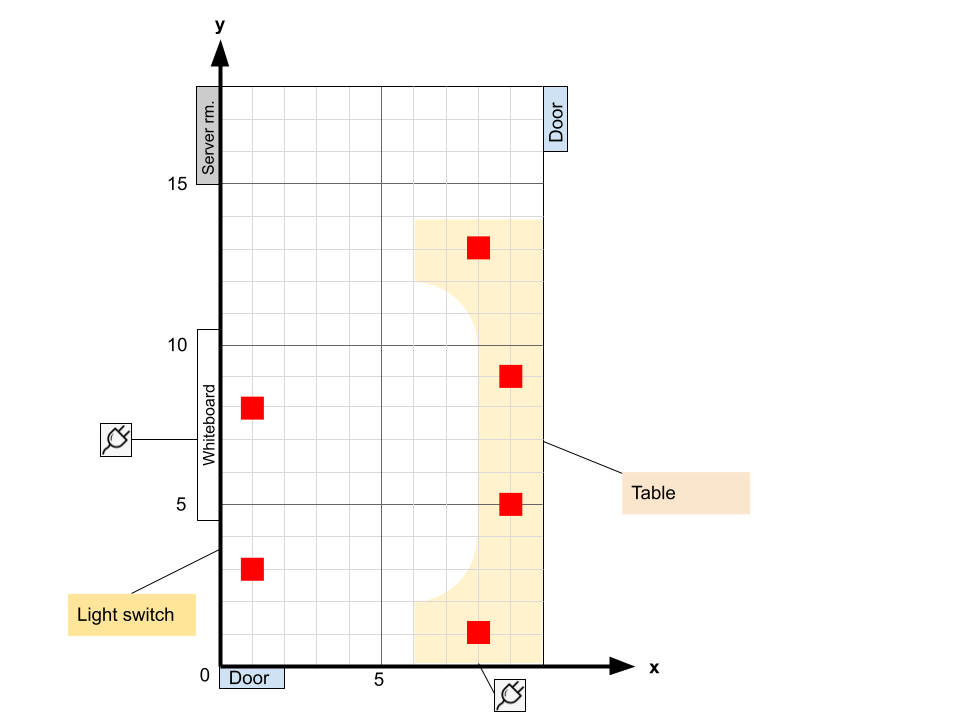

- Measured dimensions of WINLAB

WEEK FIVE

- Automated the process of connecting to Wi-Fi and checking the connection (pinging)

- Created a tunnel between the Raspberry Pi and the database

- Successfully created backend email sender & appointment form on website

- Added foundation for interactive grid on website
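The automated connectivity check from this week could look roughly like the sketch below. It is an assumption-laden illustration rather than the group's script: it assumes a Linux host such as Raspberry Pi OS, where `ping` accepts `-c` (packet count) and `-W` (reply timeout in seconds), and the helper names are hypothetical.

```python
import subprocess


def ping_command(host, count=1, timeout_s=2):
    """Build a Linux `ping` invocation: -c sets the packet count,
    -W sets how long to wait for a reply, in seconds."""
    return ["ping", "-c", str(count), "-W", str(timeout_s), host]


def is_reachable(host, run=subprocess.run):
    """Return True if `ping` exits with status 0 (a reply was received).

    `run` is injectable so the check can be tested without a network.
    """
    result = run(
        ping_command(host),
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return result.returncode == 0
```

A periodic job could call `is_reachable` with the address of the gateway or database host and trigger a reconnect when it returns `False`.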

WEEK SIX

- Continued exploring Unity

→ Began connecting Unity and ROS (SLAM for robot navigation)

- Set up a remote desktop on an ORBIT node for Unity

WEEK SEVEN

- Set up PTP on the Pis

→ The Pis do not have hardware timestamping

→ No IEEE 1588, must aim the Pi at a boundary server

→ Solution: use software emulation

→ Build a kernel that allows ptp4l to run

- Looked into using Raspberry Pi cameras for camera data

→ Successfully captured videos and viewed them using VLC

- Created the coordinate system for the test room

→ Discussed the predetermined activities and based the layout on them and on outlet placement

→ Turn on light

→ Walk in/out of room
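For the PTP item in this week's notes, the choice between hardware and software timestamping can be sketched as follows. This is a hedged illustration, not the group's setup. It assumes the capability listing printed by `ethtool -T <interface>` names hardware support with the strings `hardware-transmit` and `hardware-receive`, and it uses the `ptp4l` options `-i` (interface), `-m` (print messages to stdout), `-H` (hardware timestamping), and `-S` (software timestamping). The sample text and helper names are made up for the example.

```python
def supports_hw_timestamping(ethtool_output):
    """Return True if the `ethtool -T` text lists both hardware
    transmit and hardware receive timestamping capabilities."""
    return (
        "hardware-transmit" in ethtool_output
        and "hardware-receive" in ethtool_output
    )


def ptp4l_command(interface, ethtool_output):
    """Pick -H when the NIC can timestamp in hardware, else fall back
    to -S (software timestamping), as on the Pis described above."""
    mode = "-H" if supports_hw_timestamping(ethtool_output) else "-S"
    return ["ptp4l", "-i", interface, "-m", mode]


# Illustrative capability listing for a NIC without hardware support.
SOFTWARE_ONLY = """Time stamping parameters for eth0:
Capabilities:
	software-transmit
	software-receive
	software-system-clock
PTP Hardware Clock: none
"""
```

With the sample text above, `ptp4l_command("eth0", SOFTWARE_ONLY)` selects the software timestamping flag.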
